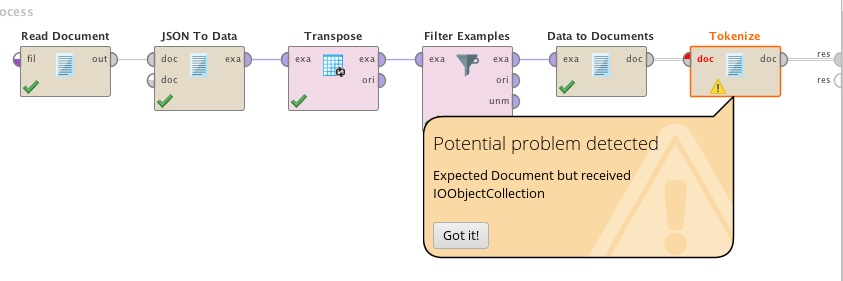

I'm trying to tokenize text in a dataset, but the 'Tokenize' node complains about the wrong data type. The dataset is a JSON file converted into data, transposed, filtered, and finally run through a 'Data to Documents' node, yet the 'Tokenize' node complains: 'Expected document but received IOObjectCollection'. What am I missing?

<?xml version="1.0" encoding="UTF-8"?><process version="9.8.001">

<context>

<input/>

<output/>

<macros/>

</context>

<operator activated="true" class="process" compatibility="9.8.001" expanded="true" name="Process">

<parameter key="logverbosity" value="init"/>

<parameter key="random_seed" value="2001"/>

<parameter key="send_mail" value="never"/>

<parameter key="notification_email" value=""/>

<parameter key="process_duration_for_mail" value="30"/>

<parameter key="encoding" value="SYSTEM"/>

<process expanded="true">

<operator activated="true" class="text:read_document" compatibility="8.2.000" expanded="true" height="68" name="Read Document" width="90" x="45" y="34">

<parameter key="file" value="/Users/alkopop79/Downloads/facebook-greglorincz_JSON_2021_march_8/messages/inbox/peterkeszthelyi_ilkoxu6ymg/message_1.json"/>

<parameter key="extract_text_only" value="true"/>

<parameter key="use_file_extension_as_type" value="true"/>

<parameter key="content_type" value="txt"/>

<parameter key="encoding" value="SYSTEM"/>

</operator>

<operator activated="true" class="text:json_to_data" compatibility="8.2.000" expanded="true" height="82" name="JSON To Data" width="90" x="179" y="34">

<parameter key="ignore_arrays" value="false"/>

<parameter key="limit_attributes" value="false"/>

<parameter key="skip_invalid_documents" value="false"/>

<parameter key="guess_data_types" value="true"/>

<parameter key="keep_missing_attributes" value="false"/>

<parameter key="missing_values_aliases" value=", null, NaN, missing"/>

</operator>

<operator activated="true" class="transpose" compatibility="9.8.001" expanded="true" height="82" name="Transpose" width="90" x="313" y="34"/>

<operator activated="true" class="filter_examples" compatibility="9.8.001" expanded="true" height="103" name="Filter Examples" width="90" x="447" y="34">

<parameter key="parameter_expression" value=""/>

<parameter key="condition_class" value="custom_filters"/>

<parameter key="invert_filter" value="false"/>

<list key="filters_list">

<parameter key="filters_entry_key" value="id.contains.content"/>

</list>

<parameter key="filters_logic_and" value="true"/>

<parameter key="filters_check_metadata" value="true"/>

</operator>

<operator activated="true" class="text:data_to_documents" compatibility="8.2.000" expanded="true" height="68" name="Data to Documents" width="90" x="581" y="34">

<parameter key="select_attributes_and_weights" value="false"/>

<list key="specify_weights"/>

</operator>

<operator activated="true" class="text:tokenize" compatibility="8.2.000" expanded="true" height="68" name="Tokenize" width="90" x="715" y="34">

<parameter key="mode" value="non letters"/>

<parameter key="characters" value=".:"/>

<parameter key="language" value="English"/>

<parameter key="max_token_length" value="3"/>

</operator>

<connect from_op="Read Document" from_port="output" to_op="JSON To Data" to_port="documents 1"/>

<connect from_op="JSON To Data" from_port="example set" to_op="Transpose" to_port="example set input"/>

<connect from_op="Transpose" from_port="example set output" to_op="Filter Examples" to_port="example set input"/>

<connect from_op="Filter Examples" from_port="example set output" to_op="Data to Documents" to_port="example set"/>

<connect from_op="Data to Documents" from_port="documents" to_op="Tokenize" to_port="document"/>

<connect from_op="Tokenize" from_port="document" to_port="result 1"/>

<portSpacing port="source_input 1" spacing="0"/>

<portSpacing port="sink_result 1" spacing="0"/>

<portSpacing port="sink_result 2" spacing="0"/>

</process>

</operator>

</process>